I was recently working on a project where we needed a multi-tenant Microsoft Sentinel environment. We had multiple Sentinel/Log Analytics workspaces and needed to run cross-workspace queries to look at the datasets, which is typically the case in MSSP environments.

When it comes to using Microsoft Sentinel as a multi-tenant solution, for instance as an MSSP (Managed Security Service Provider), there are some limitations you need to be aware of, since they affect how you should design your Sentinel service.

First off:

- Incident view in Microsoft Sentinel can only display data from up to 100 concurrent workspaces

- Cross-workspace queries can reference up to 100 concurrent workspaces

- Cross-workspace analytics rules can reference up to 100 concurrent workspaces

That means that if we have an analytics rule we want to run across a number of workspaces, such as one looking for a newly disclosed vulnerability, we can only run that analytics rule across 100 workspaces at a time. If we have more workspaces, we need to create more rules. In addition, even though we can define up to 100 concurrent workspaces, doing so adds a lot of delay to the query, since it runs the queries sequentially across each of the workspaces.
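To make the "more workspaces, more rules" point concrete, here is a minimal sketch that splits a list of workspaces into batches of at most 100, where each batch would back one copy of the analytics rule. The workspace names and the helper `batch_workspaces` are hypothetical and only for illustration; the 100-workspace figure is the limit mentioned above.

```python
import math

# Limit mentioned in the post: one cross-workspace query or analytics rule
# can reference at most 100 workspaces.
MAX_WORKSPACES_PER_RULE = 100


def batch_workspaces(workspaces, size=MAX_WORKSPACES_PER_RULE):
    """Split workspace names into consecutive batches of at most `size`.

    Each batch would back one copy of the cross-workspace analytics rule.
    """
    return [workspaces[i:i + size] for i in range(0, len(workspaces), size)]


# Hypothetical workspace names (customer-ws-001 .. customer-ws-250).
workspaces = [f"customer-ws-{n:03d}" for n in range(1, 251)]

batches = batch_workspaces(workspaces)
print(len(batches))               # 3 rules needed
print([len(b) for b in batches])  # [100, 100, 50]
print(math.ceil(len(workspaces) / MAX_WORKSPACES_PER_RULE))  # 3
```

With 250 workspaces you end up with three copies of the same rule, and every new customer past each multiple of 100 means yet another copy to maintain.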

For instance, I compared running a query across one workspace with running the same query across three workspaces. These were simple queries that looked up data for the last hour. When I reran this query multiple times, the total CPU was between 7 and 11 ms. Larger datasets and more workspaces would increase the query time considerably.

Secondly, the visual investigation graph is not available for cross-workspace incidents, so the investigation view in Sentinel will not work properly. However, the entities from the incidents will still be available.

Also, incidents created by cross-workspace analytics rules will only show up in the source workspace (where the rule runs) and not in the tenant workspace. This is, of course, common for an MSP environment.

In addition, there is a limit of 512 analytics rules per workspace. So as an MSP with fifty or more customers, you can only have about ten analytics rules per customer if you have a single source workspace (512 / 50 ≈ 10). This means that you would need multiple source workspaces to store more analytics rules.
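The arithmetic above can be sketched as a small helper. This assumes, as the ten-rules figure does, that each customer gets its own dedicated set of rules in the source workspace; the function names are hypothetical and only illustrate the 512-rule budget.

```python
import math

# Per-workspace limit on analytics rules, as stated in the post.
MAX_RULES_PER_WORKSPACE = 512


def rules_per_customer(customers, source_workspaces=1):
    """Whole rules available per customer if the budget is split evenly.

    Assumes every customer needs its own dedicated copy of each rule.
    """
    return (MAX_RULES_PER_WORKSPACE * source_workspaces) // customers


def source_workspaces_needed(customers, rules_each):
    """Source workspaces needed to host `rules_each` rules for every customer."""
    return math.ceil(customers * rules_each / MAX_RULES_PER_WORKSPACE)


print(rules_per_customer(50))            # 10
print(rules_per_customer(60))            # 8
print(source_workspaces_needed(50, 25))  # 3
```

Going from ten to twenty-five rules per customer for fifty customers already means three source workspaces to manage.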

So how can you create queries across multiple tenants with Azure Sentinel?

First, you need to deploy Azure Lighthouse within each tenant (I have written about that here –> Getting started with Azure Lighthouse | Marius Sandbu (msandbu.org)).

Then you just need to reference the other workspaces as part of the query.

Example query:

```kusto
union workspace("workspacename1").SigninLogs,
      workspace("workspacename2").SigninLogs,
      workspace("workspacename3").SigninLogs
```

This references the SigninLogs table in each workspace across the tenants. These types of queries can become cluttered if you have twenty or more workspaces, so I recommend saving this type of query as a function, which lets you reference it more easily instead of repeating that large query. For instance, if I save this query as a function called tenants, I can reference that function and apply filters directly to it.

Now, if we save this as an analytics rule and it creates an incident, it will not be immediately obvious which customer the incident relates to. To see this in the incident pane, you need to open the Info tab for the incident and look at the data sources listed further down.
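One possible workaround, not part of the setup described here, is to tag each branch of the union with the customer name so it appears as a column in the query results (and could then be surfaced in the alert, for example through the rule's custom details). The sketch below generates such a KQL query from a hypothetical customer-to-workspace mapping; the names `CUSTOMERS`, `build_tagged_union` and the `Customer` column are assumptions for illustration.

```python
# Hypothetical mapping of customer names to Log Analytics workspace names.
CUSTOMERS = {
    "Contoso": "contoso-sentinel",
    "Fabrikam": "fabrikam-sentinel",
}


def build_tagged_union(customers, table="SigninLogs"):
    """Build a KQL union over `table` in each workspace.

    Each branch is wrapped in parentheses and extended with a Customer
    column, so every result row carries the name of the tenant it came from.
    """
    branches = [
        f'(workspace("{ws}").{table} | extend Customer = "{name}")'
        for name, ws in customers.items()
    ]
    return "union " + ",\n      ".join(branches)


print(build_tagged_union(CUSTOMERS))
# union (workspace("contoso-sentinel").SigninLogs | extend Customer = "Contoso"),
#       (workspace("fabrikam-sentinel").SigninLogs | extend Customer = "Fabrikam")
```

The generated text could also serve as the body of a saved function such as tenants, so the mapping lives in one place rather than in every rule.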

The last issue is automation. Providing cross-tenant automation with Azure Logic Apps playbooks is hard, since you would need multiple API connections from Sentinel to the different tenants, which is difficult to manage at scale.

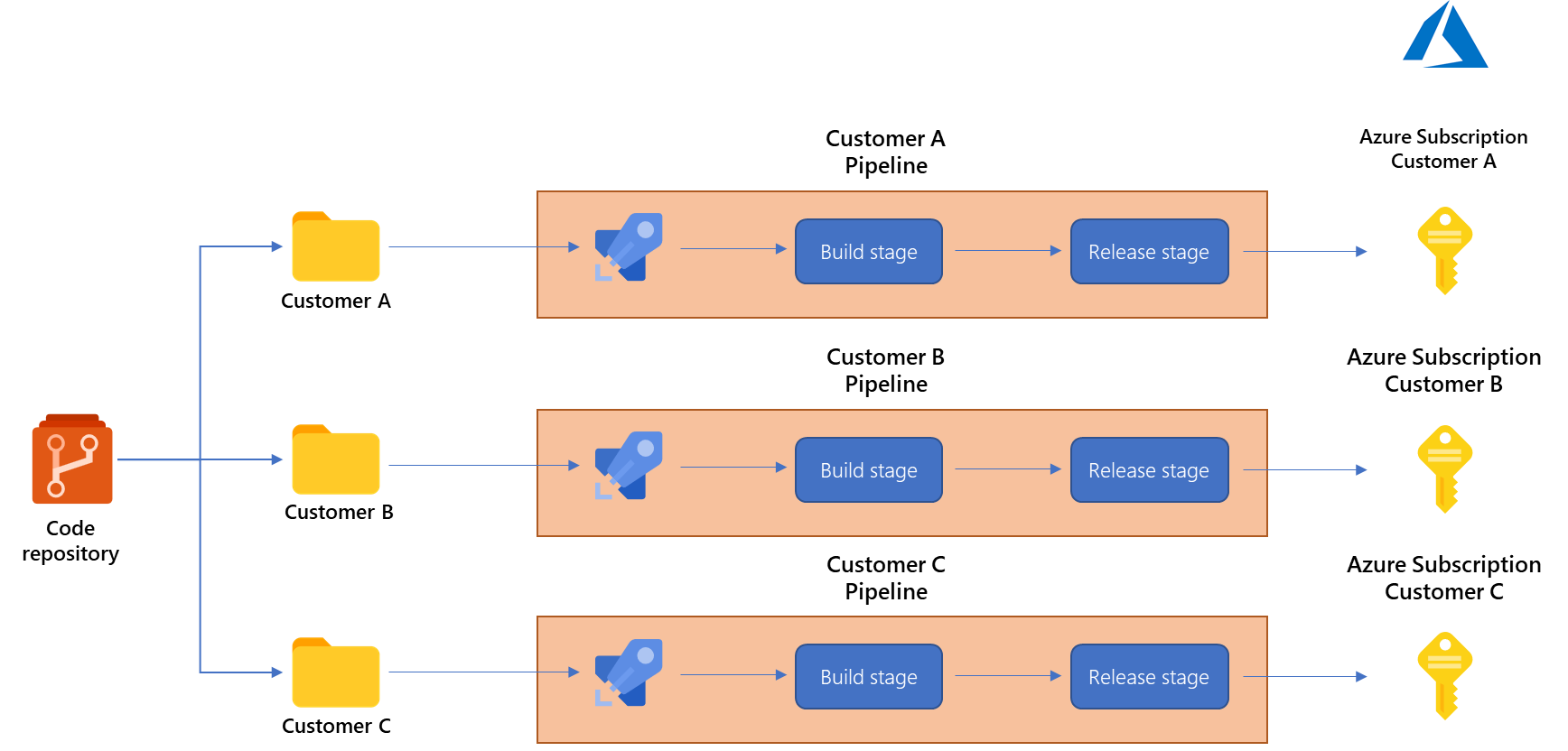

While a multi-tenant Sentinel setup seems like a good idea, a better approach would be to maintain each customer tenant as its own environment, have analytics rules that are deployed within each tenant from a single source, and use features like Azure Lighthouse to view across tenants.

This is also the approach shown in Microsoft's example –> Combining Azure Lighthouse with Microsoft Sentinel’s DevOps capabilities – Microsoft Tech Community