Citrix has announced a move towards more NFV and is pushing the limits of NetScaler, with up to 100 Gbps on a single VPX (yet to be seen). Since this was a hot topic a week back, I decided to benchmark a VPX to see what it can actually deliver in terms of SSL performance.

Now, I love the VPX: it is a good piece of software and is supported on most hypervisors, so I wanted to give this a try using the Apache benchmarking tool abs (the S is for secure).

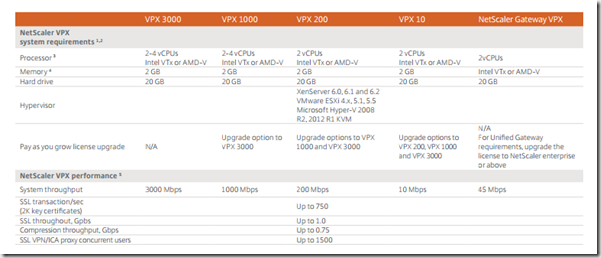

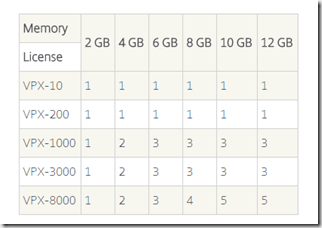

Now from the Citrix datasheet, the VPX has the following attributes

The most important one is that it can handle up to 750 SSL transactions/sec with 2K (2048-bit) certificates. My main objective was to test how many transactions it could handle, to see whether it lives up to the claim and whether we can do anything to tweak the results.

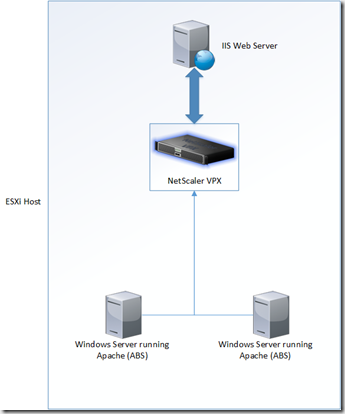

When setting this up I decided to run an isolated test within a single ESXi host, to make sure I was not losing any performance to our crappy network cards. The host itself was not constrained in any way on CPU, memory or storage, so host metrics are not included as part of the test.

The environment looked something like this (NetScaler VPX 1000):

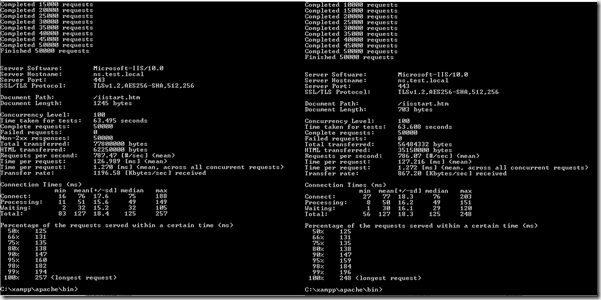

To begin with, I ran some tests against a simple vServer with a self-signed certificate that only has a 512-bit key, using the default NetScaler setup of 2 GB RAM and 2 vCPUs (of which one is used for packet processing).

NOTE: During testing I always ran the same test twice to make sure there weren’t any anomalies.

I ran a simple abs.exe -n 50000 (number of requests) -f TLS1.2 (SSL protocol to use) -c 100 (concurrent requests) https://host/samlefile.htm from the two hosts concurrently.
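To make repeated runs easier to compare, the command can be wrapped in a small script. The following is only a sketch: the helper names are made up for illustration, the sample report line is an invented fragment rather than a measurement from this test, and it assumes abs prints the same "Requests per second" line that ab does.

```python
import re

def build_ab_command(url, requests=50000, concurrency=100,
                     protocol="TLS1.2", binary="abs.exe"):
    """Build the argument list for one benchmark run (hypothetical helper)."""
    return [binary, "-n", str(requests), "-f", protocol,
            "-c", str(concurrency), url]

def parse_requests_per_second(report):
    """Extract the 'Requests per second' figure from the text report."""
    match = re.search(r"Requests per second:\s+([\d.]+)", report)
    if match is None:
        raise ValueError("no 'Requests per second' line found")
    return float(match.group(1))

# Illustrative report fragment only, NOT a result from this benchmark.
sample_report = "Requests per second:    812.50 [#/sec] (mean)\n"

cmd = build_ab_command("https://host/samlefile.htm")
print(" ".join(cmd))
print(parse_requests_per_second(sample_report))
```

For a real run, the list from `build_ab_command` could be passed to `subprocess.run(cmd, capture_output=True, text=True)` and the resulting `stdout` handed to the parser.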

The packet CPU wasn’t really having a hard time with it

And the test finished rather quickly as well, after about 65 seconds on both targets.

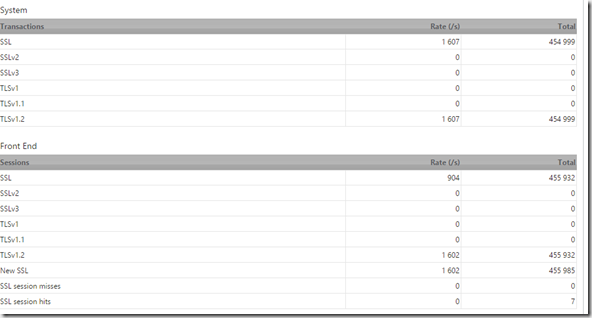

I could also see that the NetScaler was currently processing about 1600 SSL transactions each second

Then I replaced my certificate with a trusted one that has a 2048-bit key, and it became an entirely different story… The packet CPU was hitting the roof at 100%.

I could only get about 170 transactions per second on that single vCPU
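A likely reason for the drop is that the private-key operation in each new SSL handshake gets much more expensive as the key grows. The sketch below is only a rough illustration of that scaling: it times plain modular exponentiation with random operands of each size in Python, which is an assumption-laden stand-in for real RSA (no CRT optimisation, no real key) and says nothing about the NetScaler's own crypto code.

```python
import random
import time

def time_modexp(bits, rounds=20, seed=1):
    """Average seconds for one modular exponentiation with bits-sized operands."""
    rng = random.Random(seed)
    # Force the top bit (full size) and the low bit (odd modulus).
    modulus = rng.getrandbits(bits) | (1 << (bits - 1)) | 1
    # Full-size exponent, standing in for an RSA private exponent.
    exponent = rng.getrandbits(bits) | (1 << (bits - 1))
    base = rng.getrandbits(bits - 1)  # always smaller than the modulus
    start = time.perf_counter()
    for _ in range(rounds):
        pow(base, exponent, modulus)
    return (time.perf_counter() - start) / rounds

t512 = time_modexp(512)
t2048 = time_modexp(2048)
print(f"512-bit:  {t512 * 1e3:.3f} ms per operation")
print(f"2048-bit: {t2048 * 1e3:.3f} ms per operation")
print(f"ratio:    {t2048 / t512:.1f}x")
```

The exact ratio depends on the machine, but the 2048-bit operation should come out many times slower, which is in line with the packet CPU going from relaxed to 100%.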

And the test took almost 600 seconds to complete using the same testing parameters
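As a sanity check, the run times line up with the transaction rates shown on the NetScaler. A quick back-of-the-envelope calculation, assuming every request from abs results in one SSL transaction (no keep-alive or session reuse) and using the rounded run times from above:

```python
def transactions_per_second(requests_per_host, hosts, seconds):
    """Aggregate rate when several hosts each send the same number of requests."""
    return requests_per_host * hosts / seconds

# 2 load-generating hosts, 50000 requests each, run times taken from the text.
tps_512 = transactions_per_second(50000, 2, 65)    # 512-bit key, about 65 s
tps_2048 = transactions_per_second(50000, 2, 600)  # 2048-bit key, almost 600 s

print(f"512-bit key:  {tps_512:.0f} transactions/sec")
print(f"2048-bit key: {tps_2048:.0f} transactions/sec")
```

That gives roughly 1538 and 167 transactions per second, close to the "about 1600" and "about 170" seen in the NetScaler counters.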

Apparently the single packet-processing CPU is the limit here. According to the Citrix documentation on packet engine CPUs, I could raise this to 4 vCPUs (giving 3 packet-processing CPUs) as long as I adjusted the RAM as well. Then I ran another test using the same parameters.

After adjusting the CPU and memory I could use the stat CPU command to check that my packet engines were available

And I was good to go! After spinning up the test I could see that the load was being balanced well across the different packet engines

I could also see that the number of SSL transactions per second on the NetScaler had increased as well

And the test took almost half the time the first one did

That is still nowhere near what I want in SSL transactions though, so I started making some modifications, since there had to be some sort of limit somewhere.

- Adjusted the TCP profile (no effect, since TCP is not the limitation here; I got the same result. It would likely have made a difference outside of the LAN)

- Changed the protocol from TLS 1.2 to SSL 3 to see if that had any effect (no change at all)

- Changed the SSL quantum size from 8 to 16 KB (no change)

- Decided to add two more nodes to the abs testing cluster and run 4x parallel tests

This gave the packet engines a lot of work to do, and the SSL transactions went up to about 400 per second

Then I tried raising the number of concurrent connections, but still got no higher than about 400 SSL transactions per second.
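To put the 400 figure in perspective, it can be compared with what three packet engines would deliver if they scaled perfectly from the single-engine result, and with the datasheet number. This is just arithmetic on the rounded figures from this post, and it assumes linear scaling as the ideal case, which real systems rarely reach:

```python
DATASHEET_TPS = 750       # claimed SSL transactions/sec from the datasheet
single_engine_tps = 170   # measured with 1 packet engine and a 2048-bit key
packet_engines = 3        # available after moving to 4 vCPUs
observed_tps = 400        # best result with 4 parallel load generators

ideal_tps = single_engine_tps * packet_engines
efficiency = observed_tps / ideal_tps
datasheet_share = observed_tps / DATASHEET_TPS

print(f"ideal with linear scaling: {ideal_tps} transactions/sec")
print(f"scaling efficiency:        {efficiency:.0%}")
print(f"share of datasheet figure: {datasheet_share:.0%}")
```

Under these assumptions even perfect scaling (510 transactions/sec) would fall short of the datasheet's 750, and the observed 400 is about 78% of that ideal and about 53% of the datasheet figure.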

So this was part one of SSL performance benchmarking on NetScaler, stay tuned for part two!

This is really interesting, pretty much the same benchmarking tests I have done, with results showing a similar picture. We had some trouble with upload speed through an SDX stopping at approx. 60 Mbps (SSL to the backend system…) to the backend vCloud connector. When adding resources to the vCloud (more CPU and RAM to the entire cluster…), upload speed doubled without any change on the NetScaler 🙂