This question has come up many times over the last couple of weeks, including in comments on blog posts I wrote a couple of years back. I therefore decided to write a summary of the current state of things.

Many organizations use GPUs in their existing infrastructure today, either in workstations for graphical workloads such as CAD or seismic data, or on a VDI platform. You might also have GPUs deployed for ML or HPC scenarios.

Now you are asking: can we shift our GPU workloads to Public Cloud and remove the need for our own data center or custom machines?

What options do we have?

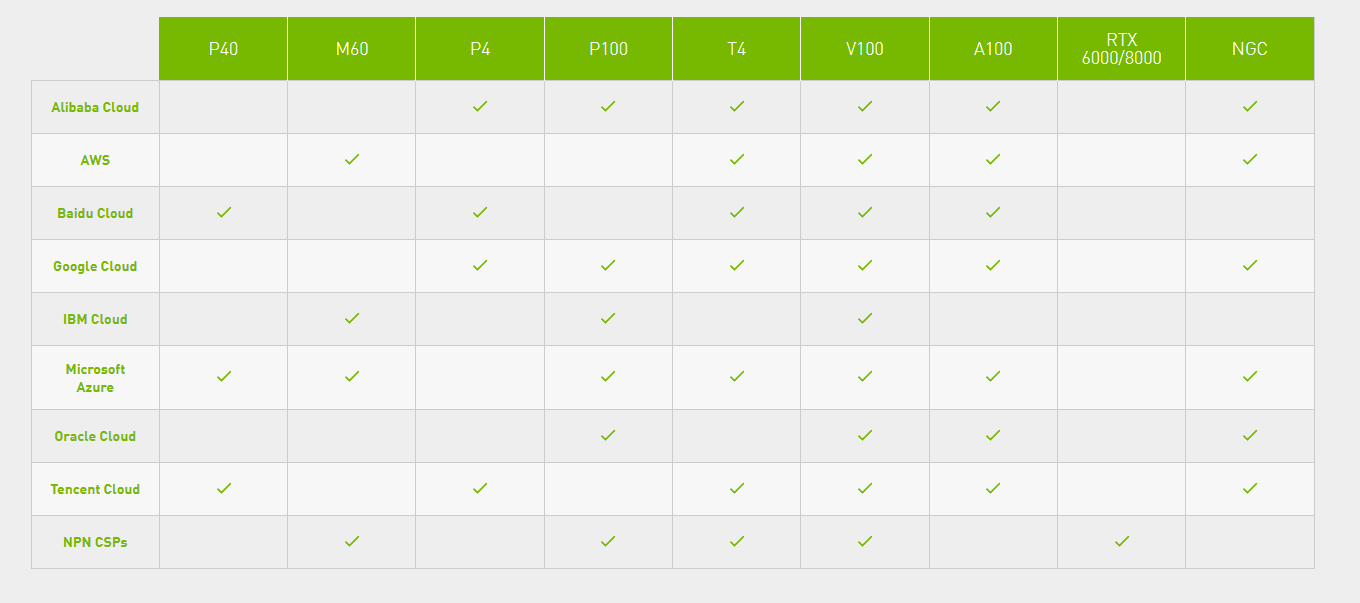

Within Public Cloud, I’ve focused on the main cloud providers (AWS, GCP, and Azure) and looked at what kind of GPU offerings they have. Luckily, NVIDIA has an overview of the Public Cloud offerings that run their GPUs.

That can be viewed here –> GPU Cloud Computing Solutions from NVIDIA

NOTE: This chart is not 100% accurate. In December, AWS also launched new GPU instances powered by NVIDIA A10G Tensor Core GPUs as part of their G5 series –> Amazon EC2 G5 Instances | Amazon Web Services

AMD, however, is still a bit limited when it comes to GPU offerings in Public Cloud. As of now they only have the NVv4 instance in Azure, which is based on MxGPU and the Mi25 card, and the Radeon Pro V520 in AWS.

If we look at the cards across all the providers, many of them already belong to an older generation, going back as far as 2014! Let’s look at an overview:

- M60 (Released in 2015)

- K80 (Released in 2014)

- P40 (Released in 2016)

- P4 (Released in 2016)

- P100 (Released in 2016)

- T4 (Released in 2018)

- V100 (Released in 2017)

- A100 (Released in 2020)

- A10 (Released in 2021)

- Radeon Pro V520 (Released in 2020)

- AMD Mi25 (Released in 2017)

So, what is the difference between them? Google has a great chart comparing some of the cards in terms of which workloads each one is suited for.

NVIDIA has released a lot of new GPU cards aimed at the virtualization market, such as the A10, A16, and A30, all released in 2021. AMD has its new Radeon PRO V620 series cards, released in November last year (and hinted to be a public cloud only offering?!).

AMD Radeon™ PRO V620 Graphics | AMD

Even with AMD bringing a new GPU card to the Cloud, the issue is that many applications use CUDA, which is proprietary to NVIDIA and not available on AMD cards. Therefore, many choose NVIDIA-based cards.

To show the difference between generations, compare the M60 card with the A10:

M60 Card: 4096 CUDA Cores, 16 GB of Video Memory

A10 Card: 9216 CUDA Cores, 24 GB of Video Memory

There is also a significant difference between the M60 and A10 in benchmark scores –> CUDA Benchmarks – Geekbench Browser
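To put the two spec lines above into numbers, here is a minimal Python sketch that computes the ratios. The `GpuSpec` class and `spec_ratio` function are names I made up for illustration; the figures are the ones quoted above. Keep in mind that CUDA core counts are not directly comparable across GPU generations, so this is a rough indicator and not a performance prediction.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Headline specs for a GPU card (illustrative helper, not a vendor API)."""
    name: str
    cuda_cores: int
    memory_gb: int


# Figures as quoted in this post.
m60 = GpuSpec("M60", cuda_cores=4096, memory_gb=16)
a10 = GpuSpec("A10", cuda_cores=9216, memory_gb=24)


def spec_ratio(new: GpuSpec, old: GpuSpec) -> dict:
    """Return how many times larger `new` is than `old` for each spec."""
    return {
        "cuda_cores": new.cuda_cores / old.cuda_cores,
        "memory": new.memory_gb / old.memory_gb,
    }


ratios = spec_ratio(a10, m60)
print(f"{a10.name} vs {m60.name}: "
      f"{ratios['cuda_cores']:.2f}x CUDA cores, "
      f"{ratios['memory']:.2f}x video memory")
```

On paper the A10 has 2.25 times the CUDA cores and 1.5 times the video memory of the M60.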

Another issue with deploying GPUs in the Public Cloud is that AWS and Azure only offer predefined virtual machine sizes. In many cases these predefined sizes are good enough, but not always.

If you need a size other than the supported ones, you might end up needing more machines to give your applications enough GPU capacity.

In GCP you can define custom VM sizes, and a GPU can be attached to any type of VM. Still, they are limited to older GPU versions.
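As a rough illustration of how predefined sizes can drive up the machine count, here is a small Python sketch. All numbers (50 users, 6 GB of GPU memory per user, VMs with 16 GB or 24 GB of GPU memory) are hypothetical and not taken from any provider's catalog, and `machines_needed` is a name I made up for this example.

```python
import math


def machines_needed(users: int, gpu_gb_per_user: float, gpu_gb_per_vm: float) -> int:
    """Number of VMs needed when each user needs a fixed slice of GPU memory.

    Assumes a user cannot be split across VMs, so any GPU memory left over
    on a VM after packing whole users is simply unused.
    """
    users_per_vm = math.floor(gpu_gb_per_vm / gpu_gb_per_user)
    if users_per_vm < 1:
        raise ValueError("VM size is too small for a single user")
    return math.ceil(users / users_per_vm)


# Hypothetical scenario: 50 users who each need 6 GB of GPU memory.
for vm_gb in (16, 24):
    count = machines_needed(users=50, gpu_gb_per_user=6, gpu_gb_per_vm=vm_gb)
    unused = vm_gb % 6  # GPU memory left idle on each VM
    print(f"{vm_gb} GB VM size: {count} machines, {unused} GB unused per machine")
```

In this made-up case the 16 GB size fits only two users per machine and leaves 4 GB idle on each, so you need 25 machines, while a 24 GB size fits four users and needs 13.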

Is the lack of hardware affecting the cloud vendors?

Now let’s look at the current state of things in terms of hardware. It is close to impossible to get hold of GPU cards these days. The global supply chain issues have definitely affected whether customers, and even cloud providers, can get hold of enough GPUs to build services.

So as of now, the biggest issues are:

1: Cloud providers are also struggling to get access to the latest GPU technology, which affects what kind of services they can provide.

2: Cloud providers need large quantities of GPUs to be able to offer them as a service, so the current supply chain issues affect their ability to build these services.

So, if you have a business that needs fast access to the newest technology, it is as of now easier to buy GPU cards for your own data center.